PCL/OpenNI tutorial 4: 3D object recognition (descriptors)

It is time to learn the basics of one of the most interesting applications of point cloud processing: 3D object recognition. Akin to 2D recognition, this technique relies on finding good keypoints (characteristic points) in the cloud, and matching them to a set of previously saved ones. But 3D has several advantages over 2D. First, we will be able to estimate with decent accuracy the exact position and orientation of the object, relative to the sensor. Second, 3D object recognition tends to be more robust to clutter (crowded scenes where objects in the front occlude objects in the background). And finally, having information about the object's shape will help with collision avoidance or grasping operations.

In this first tutorial we will see what descriptors are, which types are available in PCL, and how to compute them.

Overview

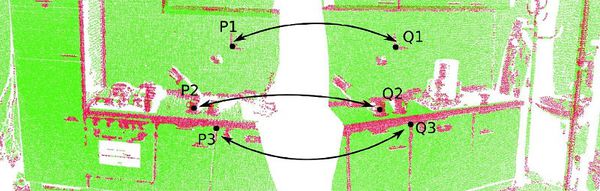

The basis of 3D object recognition is to find a set of correspondences between two different clouds, one of them containing the object we are looking for. In order to do this, we need a way to tell points apart in an unambiguous manner. Until now, we have worked with points that store the XYZ coordinates, the RGB color... but none of those properties are unique enough. In two sequential scans, two points could share the same coordinates despite belonging to different surfaces, and using the color information takes us back to 2D recognition, with all the lighting-related problems.
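To make the idea of correspondences more concrete, here is a minimal sketch in plain C++ (no PCL involved). It assumes every point already has a signature stored as a vector of floats, and pairs each scene signature with its closest model signature by brute force. The names `Descriptor`, `Correspondence` and `findCorrespondences`, as well as the distance threshold, are hypothetical and chosen only for illustration; real code would normally use a search structure such as a k-d tree instead of a double loop.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical signature type: a fixed-length vector of floats.
using Descriptor = std::vector<float>;

// Squared Euclidean distance between two signatures of equal length.
float squaredDistance(const Descriptor& a, const Descriptor& b)
{
	assert(a.size() == b.size());
	float sum = 0.0f;
	for (std::size_t i = 0; i < a.size(); ++i)
	{
		const float d = a[i] - b[i];
		sum += d * d;
	}
	return sum;
}

// Pairs one scene signature with its closest model signature.
struct Correspondence
{
	std::size_t sceneIndex;
	std::size_t modelIndex;
	float distance2; // Squared distance between both signatures.
};

// For every scene signature, find the nearest model signature (brute force)
// and keep the pair only if it is closer than maxSquaredDistance.
std::vector<Correspondence> findCorrespondences(
	const std::vector<Descriptor>& scene,
	const std::vector<Descriptor>& model,
	float maxSquaredDistance)
{
	std::vector<Correspondence> matches;
	for (std::size_t s = 0; s < scene.size(); ++s)
	{
		std::size_t best = 0;
		float bestDistance = std::numeric_limits<float>::max();
		for (std::size_t m = 0; m < model.size(); ++m)
		{
			const float d = squaredDistance(scene[s], model[m]);
			if (d < bestDistance)
			{
				bestDistance = d;
				best = m;
			}
		}
		// Reject weak matches; an empty model yields no correspondences.
		if (!model.empty() && bestDistance < maxSquaredDistance)
			matches.push_back({s, best, bestDistance});
	}
	return matches;
}
```

The threshold matters: without it, every scene point would be matched to something, even when the object is not in the scene at all.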

In a previous tutorial, we talked about features, before introducing the normals. Normals are an example of a feature, because they encode information about the vicinity of the point. That is, the neighboring points are taken into account when computing it, giving us an idea of what the surrounding surface looks like. But this is not enough. For a feature to be optimal, it must meet the following criteria:

- It must be robust to transformations: rigid transformations (the ones that do not change the distance between points) like translations and rotations must not affect the feature. Even if the cloud is moved or rotated beforehand, the computed feature should be the same.

- It must be robust to noise: measurement errors that cause noise should not change the feature estimation much.

- It must be resolution invariant: if sampled with different density (like after performing downsampling), the result must be identical or similar.
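The first criterion can be checked with a small experiment. The sketch below, in plain C++ with no PCL, builds a toy feature (the sorted distances from a point to its neighbors) and applies a rigid transformation to the cloud. The raw coordinates change, but the toy feature does not, because rigid transformations preserve distances. The names `Point`, `rigidTransform`, `distanceSignature` and `signaturesMatch` are hypothetical, and this toy feature is not one of the descriptors PCL offers; it only illustrates the invariance property.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Point
{
	double x, y, z;
};

// Rigid transformation: rotate around the Z axis by "angle" radians,
// then translate by "t". Distances between points are preserved.
Point rigidTransform(const Point& p, double angle, const Point& t)
{
	const double c = std::cos(angle);
	const double s = std::sin(angle);
	return {c * p.x - s * p.y + t.x,
			s * p.x + c * p.y + t.y,
			p.z + t.z};
}

// Toy feature: sorted distances from the query point to each neighbor.
std::vector<double> distanceSignature(const Point& query,
									  const std::vector<Point>& neighbors)
{
	std::vector<double> signature;
	signature.reserve(neighbors.size());
	for (const Point& n : neighbors)
	{
		const double dx = n.x - query.x;
		const double dy = n.y - query.y;
		const double dz = n.z - query.z;
		signature.push_back(std::sqrt(dx * dx + dy * dy + dz * dz));
	}
	// Sorting removes the dependency on the order of the neighbors.
	std::sort(signature.begin(), signature.end());
	return signature;
}

// True if both signatures have the same length and agree within "tolerance".
bool signaturesMatch(const std::vector<double>& a,
					 const std::vector<double>& b,
					 double tolerance)
{
	assert(tolerance >= 0.0);
	if (a.size() != b.size())
		return false;
	for (std::size_t i = 0; i < a.size(); ++i)
	{
		if (std::fabs(a[i] - b[i]) > tolerance)
			return false;
	}
	return true;
}
```

To try it, transform both the query point and its neighbors with the same `rigidTransform()` call and compare the two signatures with a small tolerance to absorb floating point error. Note that this toy feature is not resolution invariant: removing neighbors changes the length of the signature, which is one reason real descriptors rely on histograms instead.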

This is where descriptors come in. They are more complex (and precise) signatures of a point that encode a lot of information about the surrounding geometry. The purpose is to unequivocally identify a point across multiple point clouds, no matter the noise, resolution or transformations. Also, some of them capture additional data about the object they belong to, like the viewpoint (which lets us retrieve the pose).

Links to articles:

PCL/OpenNI tutorial 0: The very basics

PCL/OpenNI tutorial 1: Installing and testing

PCL/OpenNI tutorial 2: Cloud processing (basic)

PCL/OpenNI tutorial 3: Cloud processing (advanced)

PCL/OpenNI tutorial 4: 3D object recognition (descriptors)