PCL/OpenNI tutorial 5: 3D object recognition (pipeline)

Go to root: PhD-3D-Object-Tracking

In our previous article we saw how 3D descriptors could be used to identify points or clusters. But in order to have a working object recognition system, many more things are necessary. The sequence of steps that we have to implement to make such a system is known as the pipeline. This final article will explain how to do it. The scope of what we will talk about is very wide and many has been written, so you should consider this a simple introductory tutorial, to build a basic knowledge so you can experiment further.

Contents

Overview

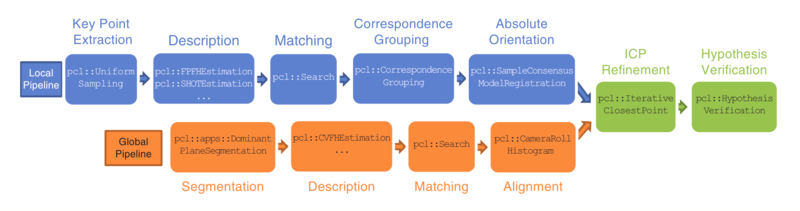

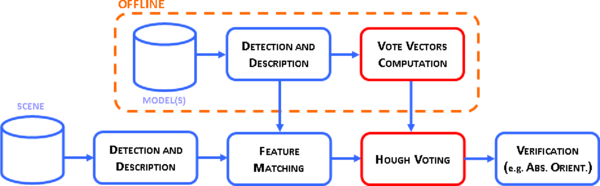

Ideally, a 3D object recognition system should be able to grab clouds from the device, preprocess them, compute descriptors, compare them with the ones stored in our object database, and output all matches with their position and orientation in the scene, in real time. Several components must then be implemented to perform these sequential steps, each one taking as input the output of the previous. This pipeline will be different depending on what type of descriptor we are using: local or global.

First of all, we have to train the system. Training means, in this case, creating a database will all the objects we want to be able to recognize. Only after that we can implement the recognition pipeline. Also, there are some postprocessing steps that are not mandatory to perform but will yield better results if done, like pose refinement and hypothesis verification.

In the next sections we will go over every step, with some PCL code snippets, as always.

- Publication:

- Point Cloud Library: Three-Dimensional Object Recognition and 6 DoF Pose Estimation (Aitor Aldoma et al., 2012)

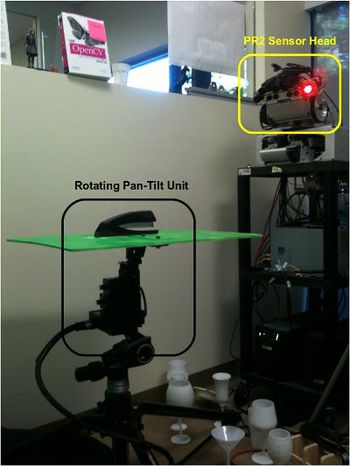

Training

As stated, we require a database with all the objects we intend to recognize later (it is possible to build a recognition system that works with object categories and high level descriptions, for example to find all things that "look" like a chair in the scene, but that is an entirely different matter). The most common way to do this is to take dozens of snapshots of every object from different angles. This can be done with a device such as a pan-tilt unit (which consists of a mechanical platform that can be rotated on two axes in known, precise amounts), or with a common table and a printed checkerboard pattern on it, to be used as ground truth to get the camera position and orientation.

Another possibility is to do it virtually, rendering 3D models of the objects (modelled beforehand with CAD software) and using the Z buffer, which contains the depth information, to simulate the input a depth sensor would give. Many 3D object datasets that are available for download have been created with one of these methods. Whichever you choose, be sure to be consistent and take all snapshots uniformly, with the same sampling level (one every X degrees). When choosing this amount, try to make a compromise between the complexity of the database (size is usually not a problem, but the more snapshots you have, the longer it will take to finish the matching step) and the precision of pose estimation.

After this, the desired descriptor(s) must be computed for every snapshot of each object, and saved to disk. If global descriptors are being used, a Camera Roll Histogram (CRH) should be included in order to retrieve the full 6 DoF pose, as many descriptors are invariant to the camera roll angle, which would limit the pose estimation to 5 DoF. Finally, ground truth information about the camera position and orientation will make it possible to compare it against the result given back by the recognition system. Most datasets include such information in text files next to the corresponding snapshot, usually in the form of a 3x3 rotation matrix and a 3D translation vector.

If you are using local descriptors, it is possible to work with a database of full objects, instead of partial snapshots (this can not be done with global descriptors because they are computed from the whole cluster, and a full object is something we would never get in practice; plus, they also encode viewpoint information). To do this, you would have to capture the object from all angles and then use registration or other technique to stitch the clouds together in order to create a complete, continous model. Resampling should also be needed because overlapping areas would have higher point density. Also, as normals are oriented according to the viewpoint when they are computed, you would have to merge them properly so they all point outwards, instead of recomputing them after composing the object cluster. The perk of using full objects is that the matching step will be shorter, as the system will not have to check against the descriptors of multiple snapshots.

Local pipeline

The following sections enumerate and explain the steps that compose the object recognition pipeline when using local descriptors.

Keypoint extraction

In this step, we have to decide which points from the current cloud will be considered keypoints (and have the local descriptor computed for them). The main characteristics of a good keypoint detector are:

- Repeatability: there should be a good chance of the same points being chosen over several iterations, even when the scene is captured from a different angle.

- Distinctiveness: the chosen keypoints should be highly characterizing and descriptive. It should be easy to describe and match them.

Because the second one depends on the local descriptor being used (and how the feature is computed), a different keypoint detection technique would have to be implemented for each. Another simple alternative is to perform downsampling on the cloud, and use all remaining points. This can be done easily with a voxel grid. Follow the link to find a code snippet that you can adapt to use in your system.

Computing descriptors

The next step is to compute the desired local descriptor(s) for every keypoint. The input parameters vary from one to another, and their efectiveness depends on the scene we are capturing and the objects we are working with, so I can not give you an ideal solution. Check the list of available descriptors, choose the one you deem best, and study the original publication to understand how it works and how the computation can be tuned to your needs, with the use of parameters (and try to understand how changing them affects the output). Refer to the given code snippets for each.

Matching

After the local descriptor has been computed for every keypoint in the cloud, we have to match them, finding correspondences with the ones we have stored in our object database. For this, a search structure like a k-d tree can be used to perform a nearest neighbor search, retrieving the Euclidean distances between descriptors (and optionally, enforcing a maximum distance value as a threshold). Every descriptor in the scene should be matched against the descriptors of every model in the database, because this accounts for the presence of multiple instances of the model, which would not be recognized if we did it the other way around.

The following code sample shows how to find all point correspondences between the cloud and a model:

#include <pcl/io/pcd_io.h>

#include <pcl/features/normal_3d.h>

#include <pcl/features/shot.h>

int

main(int argc, char** argv)

{

// Object for storing the SHOT descriptors for the scene.

pcl::PointCloud<pcl::SHOT352>::Ptr sceneDescriptors(new pcl::PointCloud<pcl::SHOT352>());

// Object for storing the SHOT descriptors for the model.

pcl::PointCloud<pcl::SHOT352>::Ptr modelDescriptors(new pcl::PointCloud<pcl::SHOT352>());

// Read the already computed descriptors from disk.

if (pcl::io::loadPCDFile<pcl::SHOT352>(argv[1], *sceneDescriptors) != 0)

{

return -1;

}

if (pcl::io::loadPCDFile<pcl::SHOT352>(argv[2], *modelDescriptors) != 0)

{

return -1;

}

// A kd-tree object that uses the FLANN library for fast search of nearest neighbors.

pcl::KdTreeFLANN<pcl::SHOT352> matching;

matching.setInputCloud(modelDescriptors);

// A Correspondence object stores the indices of the query and the match,

// and the distance/weight.

pcl::CorrespondencesPtr correspondences(new pcl::Correspondences());

// Check every descriptor computed for the scene.

for (size_t i = 0; i < sceneDescriptors->size(); ++i)

{

std::vector<int> neighbors(1);

std::vector<float> squaredDistances(1);

// Ignore NaNs.

if (pcl_isfinite(sceneDescriptors->at(i).descriptor[0]))

{

// Find the nearest neighbor (in descriptor space)...

int neighborCount = matching.nearestKSearch(sceneDescriptors->at(i), 1, neighbors, squaredDistances);

// ...and add a new correspondence if the distance is less than a threshold

// (SHOT distances are between 0 and 1, other descriptors use different metrics).

if (neighborCount == 1 && squaredDistances[0] < 0.25f)

{

pcl::Correspondence correspondence(neighbors[0], static_cast<int>(i), squaredDistances[0]);

correspondences->push_back(correspondence);

}

}

}

std::cout << "Found " << correspondences->size() << " correspondences." << std::endl;

}

Correspondence grouping

Right now, all we have is a list of correspondences between keypoints in the scene and keypoints from some object(s) in the database. This does not necessarily mean that a given object is present in the scene. Consider this example: a box with two keypoints located on opposite corners. The distance between the points is known. The matching stage has found two keypoints in the scene with very similar descriptors, but as it turns out, the distance between them is way different. Unless we are taking non-rigid transformations into account (such as scaling), then we have to discard the idea that those points belong to the object.

This check can be implemented with an additional step called correspondence grouping. Like the name states, it groups correspondences that are geometrically consistent (for the given object model) in clusters, and discards the ones that do not. Rotations and translations are permitted, but anything other than that will not meet the criteria. Also, as a minimum of 3 correspondences are required for retrieving the 6 DoF pose, groups with less than that can be ignored.

PCL offers a couple of classes to perform correspondence grouping.

Geometric consistency

This is the simplest method. It just iterates over all feature correspondences not yet grouped, and adds them to the current subset if they are geometrically consistent. The set resolution can be tuned to make the algorithm more or less forgiving when enforcing the consistency. For every subset (which should correspond with an instance of the model), this PCL class also computes the transformation (rotation and translation), making the next step (pose estimation) unnecessary.

#include <pcl/io/pcd_io.h>

#include <pcl/correspondence.h>

#include <pcl/recognition/cg/geometric_consistency.h>

int

main(int argc, char** argv)

{

// Objects for storing the keypoints of the scene and the model.

pcl::PointCloud<pcl::PointXYZ>::Ptr sceneKeypoints(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr modelKeypoints(new pcl::PointCloud<pcl::PointXYZ>);

// Objects for storing the unclustered and clustered correspondences.

pcl::CorrespondencesPtr correspondences(new pcl::Correspondences());

std::vector<pcl::Correspondences> clusteredCorrespondences;

// Object for storing the transformations (rotation plus translation).

std::vector<Eigen::Matrix4f, Eigen::aligned_allocator<Eigen::Matrix4f> > transformations;

// Read the keypoints from disk.

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[1], *sceneKeypoints) != 0)

{

return -1;

}

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[2], *modelKeypoints) != 0)

{

return -1;

}

// Note: here you would compute the correspondences.

// It has been omitted here for simplicity.

// Object for correspondence grouping.

pcl::GeometricConsistencyGrouping<pcl::PointXYZ, pcl::PointXYZ> grouping;

grouping.setSceneCloud(sceneKeypoints);

grouping.setInputCloud(modelKeypoints);

grouping.setModelSceneCorrespondences(correspondences);

// Minimum cluster size. Default is 3 (as at least 3 correspondences

// are needed to compute the 6 DoF pose).

grouping.setGCThreshold(3);

// Resolution of the consensus set used to cluster correspondences together,

// in metric units. Default is 1.0.

grouping.setGCSize(0.01);

grouping.recognize(transformations, clusteredCorrespondences);

std::cout << "Model instances found: " << transformations.size() << std::endl << std::endl;

for (size_t i = 0; i < transformations.size(); i++)

{

std::cout << "Instance " << (i + 1) << ":" << std::endl;

std::cout << "\tHas " << clusteredCorrespondences[i].size() << " correspondences." << std::endl << std::endl;

Eigen::Matrix3f rotation = transformations[i].block<3, 3>(0, 0);

Eigen::Vector3f translation = transformations[i].block<3, 1>(0, 3);

printf("\t\t | %6.3f %6.3f %6.3f | \n", rotation(0, 0), rotation(0, 1), rotation(0, 2));

printf("\t\tR = | %6.3f %6.3f %6.3f | \n", rotation(1, 0), rotation(1, 1), rotation(1, 2));

printf("\t\t | %6.3f %6.3f %6.3f | \n", rotation(2, 0), rotation(2, 1), rotation(2, 2));

std::cout << std::endl;

printf("\t\tt = < %0.3f, %0.3f, %0.3f >\n", translation(0), translation(1), translation(2));

}

}

If you do not need to compute the transformations (or prefer to do it later in its own step), you can use the "cluster()" function instead of the "recognize()" one, which only takes one parameter (the object for the clustered correspondences).

- Input: Points (model), Points (scene), Correspondences, Minimum cluster size, Consensus set resolution

- Output: Clustered correspondences, [Transformation]

- Tutorial: 3D Object Recognition based on Correspondence Grouping

- API: pcl::GeometricConsistencyGrouping

- Download

Hough voting

This method is a bit more complex. It uses a technique known as Hough transform, which was originally devised to perform line detection on 2D images, though it was later expanded to work with arbitrary shapes or higher dimensions. The method works with a voting procedure, with votes being cast on candidates that are found to be better suitable. If enough votes are accumulated for a given position in the space, then the shape is considered "found" there and its parameters are retrieved.

The voting procedure is carried out in a parameter space, which would have 6 dimensions in case a full pose needed to be estimated (rotation plus translation). Because that would be computationally expensive, the method implemented in PCL uses only a 3D Hugh space, and employs local Reference Frames (RFs) to account for the remaining 3 DoF. Its computation can then be divided in two steps.

Computing Reference Frames

For every pair of correspondences, a local Reference Frame must be computed to retrieve the pose later. PCL offers a class that implements the BOrder Aware Repeatable Directions (BOARD) algorithm.

#include <pcl/io/pcd_io.h>

#include <pcl/features/board.h>

int

main(int argc, char** argv)

{

// Objects for storing the point clouds.

pcl::PointCloud<pcl::PointXYZ>::Ptr sceneCloud(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr modelCloud(new pcl::PointCloud<pcl::PointXYZ>);

// Objects for storing the normals.

pcl::PointCloud<pcl::Normal>::Ptr sceneNormals(new pcl::PointCloud<pcl::Normal>);

pcl::PointCloud<pcl::Normal>::Ptr modelNormals(new pcl::PointCloud<pcl::Normal>);

// Objects for storing the keypoints.

pcl::PointCloud<pcl::PointXYZ>::Ptr sceneKeypoints(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr modelKeypoints(new pcl::PointCloud<pcl::PointXYZ>);

// Objects for storing the Reference Frames.

pcl::PointCloud<pcl::ReferenceFrame>::Ptr sceneRF(new pcl::PointCloud<pcl::ReferenceFrame>);

pcl::PointCloud<pcl::ReferenceFrame>::Ptr modelRF(new pcl::PointCloud<pcl::ReferenceFrame>);

// Read the scene and model clouds from disk.

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[1], *sceneCloud) != 0)

{

return -1;

}

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[2], *modelCloud) != 0)

{

return -1;

}

// Note: here you would estimate the normals for the whole cloud, and choose

// the keypoints. It has been omitted here for simplicity.

// BOARD RF estimation object.

pcl::BOARDLocalReferenceFrameEstimation<pcl::PointXYZ, pcl::Normal, pcl::ReferenceFrame> rf;

// Search radius (maximum distance of the points used to estimate the X and Y axes

// of the BOARD Reference Frame for a given point).

rf.setRadiusSearch(0.02f);

// Check if support is complete, or has missing regions because it is too close to mesh borders.

rf.setFindHoles(true);

rf.setInputCloud(sceneKeypoints);

rf.setInputNormals(sceneNormals);

rf.setSearchSurface(sceneCloud);

rf.compute(*sceneRF);

rf.setInputCloud(modelKeypoints);

rf.setInputNormals(modelNormals);

rf.setSearchSurface(modelCloud);

rf.compute(*modelRF);

}

Be sure to check the API reference because the BOARD estimation object has many more parameters to tune.

- Input: Points, Normals, Search radius, [Tangent radius], [Margin array size], [Margin threshold], [Find holes], [Hole size threshold], [Steepness threshold]

- Output: Reference Frames

- Tutorial: 3D Object Recognition based on Correspondence Grouping

- Publication: On the repeatability of the local reference frame for partial shape matching (Alioscia Petrelli and Luigi Di Stefano, 2011)

- API: pcl::BOARDLocalReferenceFrameEstimation

- Download

Clustering

#include <pcl/io/pcd_io.h>

#include <pcl/recognition/cg/hough_3d.h>

int

main(int argc, char** argv)

{

// Objects for storing the keypoints of the scene and the model.

pcl::PointCloud<pcl::PointXYZ>::Ptr sceneKeypoints(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr modelKeypoints(new pcl::PointCloud<pcl::PointXYZ>);

// Objects for storing the Reference Frames.

pcl::PointCloud<pcl::ReferenceFrame>::Ptr sceneRF(new pcl::PointCloud<pcl::ReferenceFrame>);

pcl::PointCloud<pcl::ReferenceFrame>::Ptr modelRF(new pcl::PointCloud<pcl::ReferenceFrame>);

// Objects for storing the unclustered and clustered correspondences.

pcl::CorrespondencesPtr correspondences(new pcl::Correspondences());

std::vector<pcl::Correspondences> clusteredCorrespondences;

// Object for storing the transformations (rotation plus translation).

std::vector<Eigen::Matrix4f, Eigen::aligned_allocator<Eigen::Matrix4f> > transformations;

// Read the keypoints from disk.

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[1], *sceneKeypoints) != 0)

{

return -1;

}

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[2], *modelKeypoints) != 0)

{

return -1;

}

// Note: here you would compute the correspondences and the Reference Frames.

// It has been omitted here for simplicity.

// Object for correspondence grouping.

pcl::Hough3DGrouping<pcl::PointXYZ, pcl::PointXYZ, pcl::ReferenceFrame, pcl::ReferenceFrame> grouping;

grouping.setInputCloud(modelKeypoints);

grouping.setInputRf(modelRF);

grouping.setSceneCloud(sceneKeypoints);

grouping.setSceneRf(sceneRF);

grouping.setModelSceneCorrespondences(correspondences);

// Minimum cluster size. Default is 3 (as at least 3 correspondences

// are needed to compute the 6 DoF pose).

grouping.setHoughThreshold(3);

// Size of each bin in the Hough space.

grouping.setHoughBinSize(3);

// If true, the vote casting procedure will interpolate the score

// between neighboring bins in the Hough space.

grouping.setUseInterpolation(true);

// If true, the vote casting procedure will use the correspondence's

// weighted distance to compute the Hough voting score.

grouping.setUseDistanceWeight(false);

grouping.recognize(transformations, clusteredCorrespondences);

std::cout << "Model instances found: " << transformations.size() << std::endl << std::endl;

for (size_t i = 0; i < transformations.size(); i++)

{

std::cout << "Instance " << (i + 1) << ":" << std::endl;

std::cout << "\tHas " << clusteredCorrespondences[i].size() << " correspondences." << std::endl << std::endl;

Eigen::Matrix3f rotation = transformations[i].block<3, 3>(0, 0);

Eigen::Vector3f translation = transformations[i].block<3, 1>(0, 3);

printf("\t\t | %6.3f %6.3f %6.3f | \n", rotation(0, 0), rotation(0, 1), rotation(0, 2));

printf("\t\tR = | %6.3f %6.3f %6.3f | \n", rotation(1, 0), rotation(1, 1), rotation(1, 2));

printf("\t\t | %6.3f %6.3f %6.3f | \n", rotation(2, 0), rotation(2, 1), rotation(2, 2));

std::cout << std::endl;

printf("\t\tt = < %0.3f, %0.3f, %0.3f >\n", translation(0), translation(1), translation(2));

}

}

If you do not give the Reference Frames to the Hough grouping object, it will calculate them itself, but then you need to specify additional parameters. See the API for more details. Also, you can "cluster()" instead of "recognize()" if you want to skip the pose estimation now.

- Input: Points (model), Points (scene), Correspondences, Reference Frames (model), Reference Frames (scene), Hough bin size, Hough threshold, [Use distance weight], [Use interpolation]

- Output: Clustered correspondences, [Transformation]

- Tutorial: 3D Object Recognition based on Correspondence Grouping

- Publication: Object recognition in 3D scenes with occlusions and clutter by Hough voting (Federico Tombari and Luigi Di Stefano, 2010)

- API: pcl::Hough3DGrouping

- Download

Pose estimation

The two correspondence grouping classes included in PCL already compute the full pose, but you can do it manually if you want, or if you want to refine the results of the previous step (removing correspondences that are not compatible with the same 6 DoF pose). You can use a method like RANSAC (RANdom SAmple Consensus), to get the rotation and translation of the rigid transformation that best fits the correspondences of a model instance. In a previous tutorial we mentioned that there was a technique called feature-based registration which was an alternative to ICP, and this is it.

Of course, PCL has some classes for this. The one we are going to see adds a prerejection step to the RANSAC loop, in order to discard hypotheses that are probably wrong. To do this, the algorithm forms polygons with the points of the input sets, and then checks pose-invariant geometrical constraints. To be precise, it makes sure that the polygon edges are of similar length. You can let it run for a specified number of iterations (as, like you already know, RANSAC never actually converges), or set a threshold.

#include <pcl/io/pcd_io.h>

#include <pcl/features/shot.h>

#include <pcl/registration/sample_consensus_prerejective.h>

int

main(int argc, char** argv)

{

// Objects for storing the scene and the model.

pcl::PointCloud<pcl::PointXYZ>::Ptr scene(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr model(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr alignedModel(new pcl::PointCloud<pcl::PointXYZ>);

// Objects for storing the SHOT descriptors for the scene and model.

pcl::PointCloud<pcl::SHOT352>::Ptr sceneDescriptors(new pcl::PointCloud<pcl::SHOT352>());

pcl::PointCloud<pcl::SHOT352>::Ptr modelDescriptors(new pcl::PointCloud<pcl::SHOT352>());

// Read the clouds from disk.

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[1], *scene) != 0)

{

return -1;

}

if (pcl::io::loadPCDFile<pcl::PointXYZ>(argv[2], *model) != 0)

{

return -1;

}

// Note: here you would compute or load the descriptors for both

// the scene and the model. It has been omitted here for simplicity.

// Object for pose estimation.

pcl::SampleConsensusPrerejective<pcl::PointXYZ, pcl::PointXYZ, pcl::SHOT352> pose;

pose.setInputSource(model);

pose.setInputTarget(scene);

pose.setSourceFeatures(modelDescriptors);

pose.setTargetFeatures(sceneDescriptors);

// Instead of matching a descriptor with its nearest neighbor, choose randomly between

// the N closest ones, making it more robust to outliers, but increasing time.

pose.setCorrespondenceRandomness(2);

// Set the fraction (0-1) of inlier points required for accepting a transformation.

// At least this number of points will need to be aligned to accept a pose.

pose.setInlierFraction(0.25f);

// Set the number of samples to use during each iteration (minimum for 6 DoF is 3).

pose.setNumberOfSamples(3);

// Set the similarity threshold (0-1) between edge lengths of the polygons. The

// closer to 1, the more strict the rejector will be, probably discarding acceptable poses.

pose.setSimilarityThreshold(0.6f);

// Set the maximum distance threshold between two correspondent points in source and target.

// If the distance is larger, the points will be ignored in the alignment process.

pose.setMaxCorrespondenceDistance(0.01f);

pose.align(*alignedModel);

if (pose.hasConverged())

{

Eigen::Matrix4f transformation = pose.getFinalTransformation();

Eigen::Matrix3f rotation = transformation.block<3, 3>(0, 0);

Eigen::Vector3f translation = transformation.block<3, 1>(0, 3);

std::cout << "Transformation matrix:" << std::endl << std::endl;

printf("\t\t | %6.3f %6.3f %6.3f | \n", rotation(0, 0), rotation(0, 1), rotation(0, 2));

printf("\t\tR = | %6.3f %6.3f %6.3f | \n", rotation(1, 0), rotation(1, 1), rotation(1, 2));

printf("\t\t | %6.3f %6.3f %6.3f | \n", rotation(2, 0), rotation(2, 1), rotation(2, 2));

std::cout << std::endl;

printf("\t\tt = < %0.3f, %0.3f, %0.3f >\n", translation(0), translation(1), translation(2));

}

else std::cout << "Did not converge." << std::endl;

}

For an alternative, check the pcl::SampleConsensusModelRegistration or the pcl::SampleConsensusInitialAlignment classes.

- Input: Points (model), Points (scene), Descriptors (model), Descriptors (scene), [Correspondence randomness], [Inlier fraction], [Number of samples], [Similarity threshold]

- Output: Aligned model, [Transformation]

- Tutorials: Robust pose estimation of rigid objects, Aligning object templates to a point cloud

- Publication: Pose Estimation using Local Structure-Specific Shape and Appearance Context (Anders Glent Buch et al., 2013)

- API: pcl::SampleConsensusPrerejective, pcl::registration::CorrespondenceRejectorPoly

- Download

Global pipeline

To be concluded... (work in progress)

Go to root: PhD-3D-Object-Tracking

Links to articles:

PCL/OpenNI tutorial 0: The very basics

PCL/OpenNI tutorial 1: Installing and testing

PCL/OpenNI tutorial 2: Cloud processing (basic)

PCL/OpenNI tutorial 3: Cloud processing (advanced)

PCL/OpenNI tutorial 4: 3D object recognition (descriptors)

PCL/OpenNI tutorial 5: 3D object recognition (pipeline)