RoCKIn2014Camp

Proposal

We want to develop and deploy the minimal functional abilities needed to take part in RoCKIn 2014:

- Navigation

- Mapping

- People recognition

- Person tracking

- Object recognition

- Object manipulation

- Speech recognition

- Gesture recognition

- Cognition

Robot

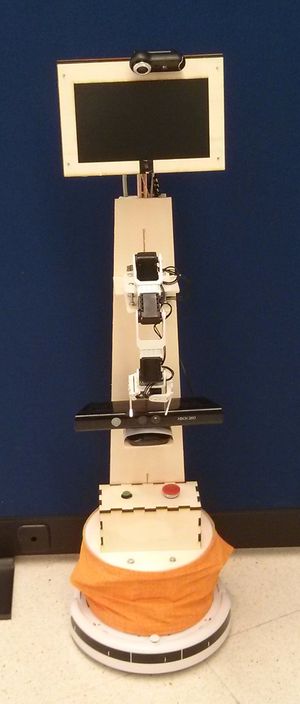

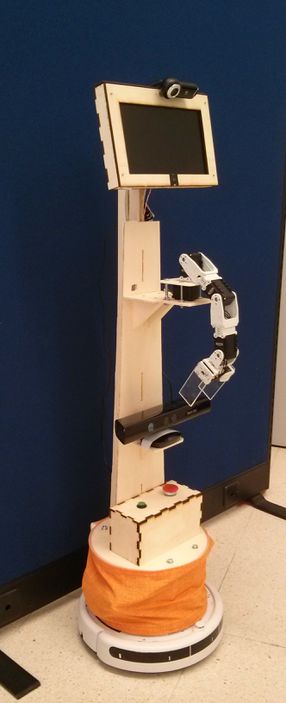

We want to take part in RoCKIn with the platform developed over the last two years at the Catedra Telefónica-ULE.

Robot Hardware

- iRobot Roomba 520

- Dynamixel arm (5x12a)

- Wooden frame (yes, it is made of wood)

- Notebook (Atom processor; the display has been detached from the main body)

- Kinect

- Arduino Mega

Robot Software

- ROS (robot control)

- MYRA (C/C++, ArUco, Qt, OpenCV)

Project setup

We divided the development into four phases:

- Phase I: Initial Setup

- Phase II: Integration and architecture

- Phase III: Platform test

- Phase IV: Improvements and complex tasks

- Technical Challenge: Furniture-type Object perception

- Open Challenge: Exhibit and demonstrate the most important (scientific) achievements

Phase I: Initial Setup

Outline: Tasks developed in this phase

Phase II: Integration and Architecture

Outline: Tasks developed in this phase

Phase III: Platform test

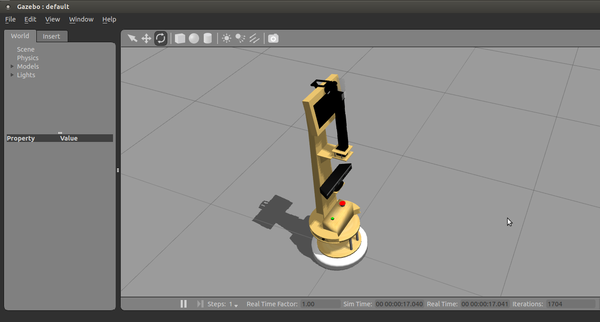

Simulator

WARNING: This part is still in Spanish. Please feel free to ask if you have any questions.

Hardware

<wikiflv width="300" height="250" logo="true">/videos/Test1.flv</wikiflv>

<wikiflv width="300" height="250" logo="true">/videos/Test2.flv</wikiflv>

Non-Critical (but to-do)

- Android/iOS Teleoperation

- Desktop Qt interface (WIP)

RoCKIn Camp 2014 - Summary

These were the highlights of the camp:

Migration to ROS Hydro

Object Recognition

<videoflash>Ioz-x2JyxGQ</videoflash>

MoveIt!

<videoflash>6GZv_pZFRYk</videoflash>