RoCKInChallengeToulouse

Sponsors and Acknowledgments

First of all, we would like to thank the Spanish Ministry of Economy and Competitiveness for partially supporting this work under grant DPI2013-40534-R.

We want to thank these companies for their support of the Watermelon team:

RoCKIn Project Description

This challenge focuses on domestic service robots. The project aims to create robots with enhanced networking and cognitive abilities that can perform useful tasks such as helping the impaired and the elderly (one of the main goals of our group). In the initial stages of the competition, individual robots start with basic tasks, such as navigating through the rooms of a house, manipulating objects or recognizing faces, and then coordinate to handle several housekeeping tasks simultaneously, some of them through natural interaction with humans.

Watermelon Project: Team Description

- Project Codename:

Watermelon Project

- Team Coordinator:

Vicente Matellán Olivera

- Team Members:

Technical - Manipulation/Grasping, Simulation: Fernando Casado

Technical - SW Integration, Middleware, 2D Perception: Francisco Martín Rico

Technical - SW Integration, HRI Dialogue, Team Leader: Francisco Lera

Technical - Hardware: Carlos Rodríguez

Technical - 3D Perception: Víctor Rodríguez

- Other Information:

Academic Year: 2014-2015

Repositories: https://github.com/Robotica-ule/MYRABot

Tags: Robotics competitions, Feasible Assistive Robots

Technology: ROS, PCL, C++, svn, OpenCV, cmake, OpenGL, Qt, Aruco

State: Development

Project Summary

Our challenge is to create a feasible platform able to take part in robotics competitions. We are working on integrating different hardware solutions into a DIY robot.

Robot

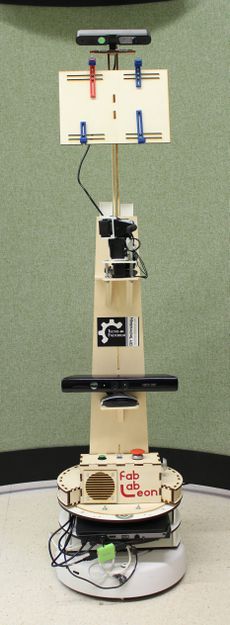

We want to take part in RoCKIn with the platform developed over the last two years at the Cátedra Telefónica-ULE.

Robot Hardware

| Component | Model | Description |
| --- | --- | --- |
| Frame | n/a | Poplar laminated wood |
| Computer 1 | Mountain F-13 | web |
| Computer 2 | Mountain Prototype | Touch display, opens 360°, Intel i5 |
| Controllers | (a) Arduino 2560, (b) USB2serial | (a) arm, range sensors, (b) Create |
| Base | Create (iRobot) | web |
| Ultrasound Sensors | Maxsensor mb1220 (x5) | Range: 7 meters |
| RGB sensor | Logitech | Webcam |
| RGBD sensors | Kinect, Asus Xtion | |
| Battery | standard | 12V, 7A |
| Arm (Actuator) | Dynamixel AX-12 servos (x5) | Joints and servomotors from Bioloid |

Robot Software

| Option | Control Software | Version | Description |
| --- | --- | --- | --- |
| Robot (Simulator available) | Gazebo and ROS | Gazebo 1.19, ROS Hydro | |

Competition Benchmarks

The competition offers six different benchmarks. We have focused our work on four of them, described in the following sections.

TBM2 - Welcoming Visitors

Description

Lab Test

Toulouse Test

FBM1 - Object Perception

The goal is to assess a robot's ability to process perception sensor data in order to extract information about observed objects.

Lab Test

Toulouse Test

FBM2 - Object Manipulation

This functional benchmark assesses a robot's ability to correctly operate manual controls of the kind commonly found on domestic appliances operated by humans, such as light switches.

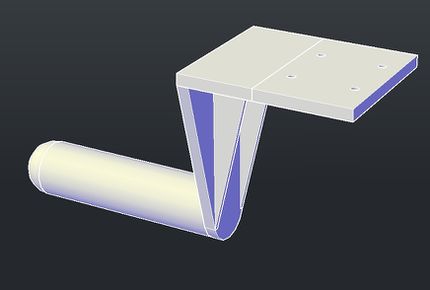

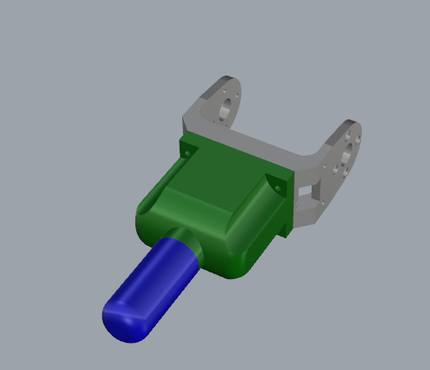

Modelling

Model 1

Model 2

Final Prototype

Lab Test

<videoflash>hDI7Yruv-u4</videoflash>

Toulouse Test

FBM3 - Speech Understanding

The goal of this functional benchmark is to evaluate the ability of a robot to understand speech commands that a user gives in a home environment.

Lab Test

Toulouse Test

Mountain Prototype

Thanks to our sponsor MOUNTAIN, we have developed a light version of MYRABot. This robot runs on the Mountain prototype, which has a touch screen. The main feature of this prototype is that its display can rotate 360 degrees: it flips around so that the prototype can be used as a tablet.